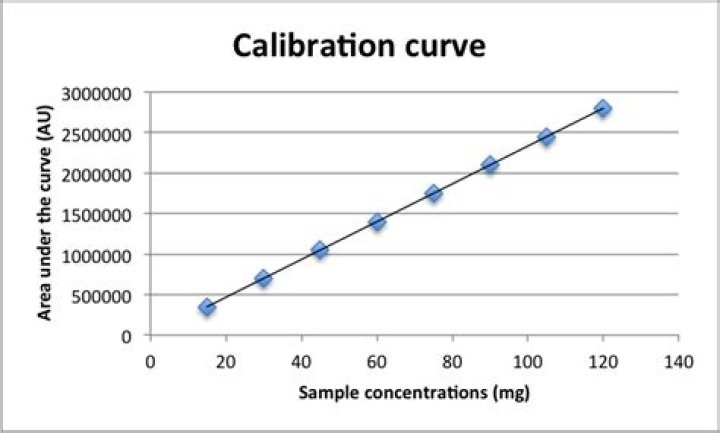

Why is a calibration curve linear?

Daniel Johnston

The general reason for preferring a linear calibration curve is that it is simple and makes LOD/LOQ calculations simple. Quadratic curves are not that uncommon in atomic absorption analysis, and one can use them. To judge how far a fit departs from a straight line, check the value of the first coefficient a in y = ax² + bx + c: the closer a is to zero, the closer the curve is to linear.
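As a rough illustration of both points, the sketch below fits a straight line and a quadratic to the same set of standards, compares the quadratic term with the linear term at the top standard, and estimates LOD and LOQ from the linear fit. The data are invented for illustration, the function names are hypothetical, and the 3.3·s/slope and 10·s/slope formulas are one common convention (residual standard deviation divided by slope), assumed here rather than taken from the text above.

```python
import numpy as np

# Hypothetical standards: concentration (arbitrary units) and instrument signal.
# The signal is generated with a small negative quadratic term on purpose.
conc = np.array([0.0, 1.0, 2.0, 3.0, 4.0, 5.0])
signal = 0.02 + 0.10 * conc - 0.004 * conc**2


def fit_quadratic(x, y):
    """Least-squares fit of y = a*x^2 + b*x + c; returns (a, b, c)."""
    a, b, c = np.polyfit(x, y, 2)
    return a, b, c


def curvature_fraction(a, b, x_max):
    """Size of the quadratic term relative to the linear term at x_max."""
    return abs(a * x_max) / abs(b)


def lod_loq(x, y):
    """Estimate LOD and LOQ from a linear fit (assumed convention:
    LOD = 3.3*s/slope, LOQ = 10*s/slope, s = residual standard deviation)."""
    slope, intercept = np.polyfit(x, y, 1)
    residuals = y - (slope * x + intercept)
    s = np.sqrt(np.sum(residuals**2) / (len(x) - 2))  # n - 2 degrees of freedom
    return 3.3 * s / slope, 10.0 * s / slope


a, b, c = fit_quadratic(conc, signal)
fraction = curvature_fraction(a, b, conc.max())
lod, loq = lod_loq(conc, signal)

print(f"a = {a:.4f}, b = {b:.4f}, c = {c:.4f}")
print(f"quadratic term is {fraction:.0%} of the linear term at the top standard")
print(f"LOD = {lod:.3f}, LOQ = {loq:.3f}")
```

If the quadratic term is a negligible fraction of the linear term over the working range, the simpler linear model is usually adequate. What counts as negligible is a judgment for the method in question.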

Why should a calibration curve be linear?

Linear calibration curves are desirable because they result in the best accuracy and precision. A plot of the calibration data and the fitted line should always be examined to check for outliers and to verify linear behavior.

Do calibration curves have to be linear?

Many calibration curves are linear and can be fit with the basic equation y = mx + b, where m is the slope and b is the y-intercept. However, not all curves are linear, and sometimes one or both axes must be plotted on a logarithmic scale to obtain a straight line.

What does it mean when a calibration curve is linear?

A linear calibration curve is a positive indication of assay performance in a validated analytical range. Other characteristics of the calibration curve, including regression model, slope of the line, weighting and correlation coefficient, need to be carefully evaluated.

Is a calibration curve straight?

When you calibrate an instrument, you normally calibrate it only over a range where the response is linear. Over a large enough range, all responses are non-linear. Sometimes you need to use a log transform or create a quadratic model. Many chemists are reluctant to use a non-linear calibration curve.

Calibration Curves, Blanks, and Method Verification Terminology

Is a standard curve linear?

Linear standard curves: a standard curve is a graph relating a measured quantity (radioactivity, fluorescence, or optical density, for example) to concentration of the substance of interest in "known" samples.
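A minimal sketch of how such a standard curve might be built and then read, assuming a linear response: the slope and intercept come from the closed-form least-squares formulas, and an unknown's concentration is obtained by inverting y = mx + b. The optical density values and all function names are made up for illustration.

```python
import numpy as np

# Hypothetical "known" samples: concentration and measured optical density.
known_conc = np.array([0.0, 2.0, 4.0, 6.0, 8.0])
known_od = np.array([0.05, 0.29, 0.53, 0.77, 1.01])


def fit_line(x, y):
    """Closed-form least squares for y = m*x + b; returns (m, b, r_squared)."""
    x_mean, y_mean = x.mean(), y.mean()
    sxx = np.sum((x - x_mean) ** 2)
    sxy = np.sum((x - x_mean) * (y - y_mean))
    m = sxy / sxx
    b = y_mean - m * x_mean
    ss_res = np.sum((y - (m * x + b)) ** 2)
    ss_tot = np.sum((y - y_mean) ** 2)
    return m, b, 1.0 - ss_res / ss_tot


def concentration_from_signal(y, m, b):
    """Invert the fitted line to read a concentration off the standard curve."""
    return (y - b) / m


m, b, r_squared = fit_line(known_conc, known_od)
unknown_conc = concentration_from_signal(0.41, m, b)

print(f"slope = {m:.3f}, intercept = {b:.3f}, r^2 = {r_squared:.4f}")
print(f"unknown with OD 0.41 -> concentration {unknown_conc:.2f}")
```

The inversion is only meaningful for signals that fall inside the range spanned by the known samples; readings outside that range would be extrapolations.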

What is the purpose of linearity?

Linearity is the ability to provide laboratory test results that are directly proportional to the concentration of the measurand (quantity to be measured) in a test sample. Medical laboratory tests are essential to the clinical management of patients.

Can a calibration curve be quadratic?

The most common sources of deviation from Beer's Law, stray light and polychromatic radiation, lead to concave-down non-linearity in the calibration curves, which can be fit approximately (but not exactly) using quadratic or cubic least-squares fits.

How do you explain a calibration curve?

The calibration curve is a plot of how the instrumental response, the so-called analytical signal, changes with the concentration of the analyte. The operator prepares a series of standards across a range of concentrations near the expected concentration of analyte in the unknown.

How do you do linear calibration?

The equation is in the form "y = mx + b," where m is the slope and b is the y-axis intercept of the straight line. Once the calibration is complete, the chart can be customized by editing the title and adding axis titles.

Is a calibration curve a line of best fit?

A linear calibration curve (line of best fit) is normally calculated using a least squares fitting algorithm. This is what Excel does: it minimises the sum of the squared differences between your points and the line it draws through them, and then gives you the equation of that line.

Should an ELISA standard curve be linear?

We recommend using a sample of known concentration as a positive control. The concentration of the positive control sample should be within the linear section of the standard curve in order to obtain valid and accurate results.

What is linearity in analytical method validation?

The linearity of an analytical procedure is its ability (within a given range) to obtain test results which are directly proportional to the concentration (amount) of analyte in the sample.

Which dilution technique is more suitable to do a calibration curve?

Calibration solutions more evenly spaced: evenly spaced calibration standards are easier to prepare using serial dilution. Each successive standard uses a small portion of the previous standard, which is diluted by solvent to generate the next calibration standard in the series.
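The arithmetic of a serial dilution can be sketched as follows, assuming the same dilution factor at every step. With a constant factor the concentrations form a geometric series, so the standards are evenly spaced on a logarithmic scale. The stock concentration, dilution factor, volumes, and function names are illustrative assumptions.

```python
def serial_dilution(stock_conc, dilution_factor, n_standards):
    """Concentrations obtained by repeatedly diluting the previous solution."""
    concentrations = []
    current = stock_conc
    for _ in range(n_standards):
        current = current / dilution_factor  # each step dilutes the previous one
        concentrations.append(current)
    return concentrations


def transfer_volume(final_volume, dilution_factor):
    """Volume of the previous standard to carry over into each new standard."""
    return final_volume / dilution_factor


# Hypothetical example: 100 mg/L stock, two-fold dilutions, five standards,
# each made up to a final volume of 10 mL.
standards = serial_dilution(100.0, 2.0, 5)
aliquot = transfer_volume(10.0, 2.0)

print(standards)
print(f"carry over {aliquot} mL of the previous standard, then dilute to 10 mL")
```

Because each standard is made from the one before it, any pipetting error in an early step carries through to all later standards, which is worth keeping in mind when choosing this approach.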